New Car Safety Technology Could Cause Confusion, Accidents if Drivers Aren't Trained

Image credits: Zapp2Photo/Shutterstock

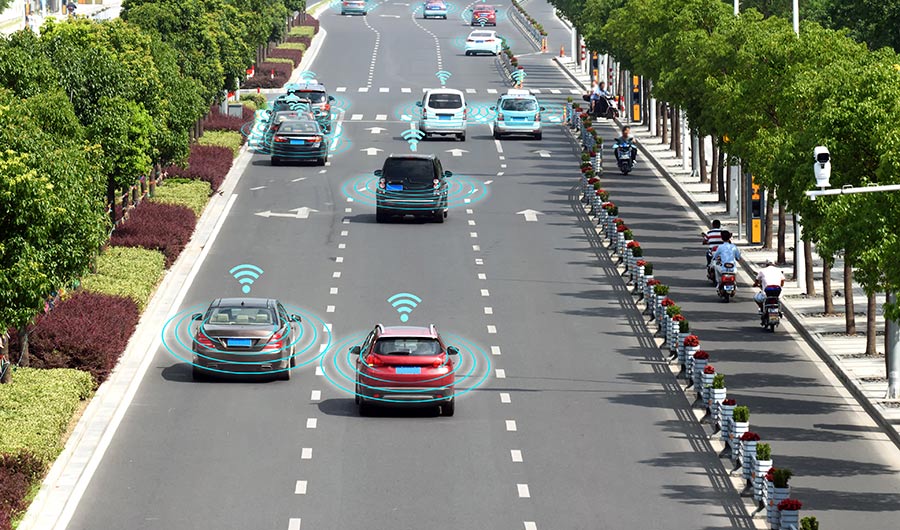

(Inside Science) -- Automakers are putting safety technology into new cars that drivers may not understand or respond to -- and that could be a problem, according to researchers who study how humans make decisions and interact with machines.

Automated assistance technology in automobiles, such as automatic emergency braking and systems that keep cars from straying out of a highway lane, parallels the advent of autopilots in aircraft in the 20th century.

As was true for aircraft pilots decades ago, drivers need to learn how the emerging technology works and what its limitations are if driving is to become as safe as flying, said Edwin Hutchins, professor of cognitive science at the University of California, San Diego.

Researchers are just now beginning to study how drivers interact with automated systems in cars, said Hutchins. He and NASA scientist Stephen Casner published a long discussion of the issues in March in the Journal of Cognitive Engineering and Decision Making.

They wrote that while automation helped prevent airplanes from flying into mountains or crashing into other planes -- accidents we knew could happen -- it also helped produce new kinds of accidents we didn’t know could happen. The recent crashes of Boeing 737 Max airliners operated by Lion Air and Ethiopian Airlines are examples.

The computers on board both 737s received erroneous information and overruled the pilots, who fought to take back control. An anti-stall system, the Maneuvering Characteristics Augmentation System, apparently malfunctioned on both planes, and the pilots weren’t trained for that possibility. In part, the crashes highlight a philosophical dispute between Boeing and Airbus over who or what should control the plane. Boeing says the pilot; Airbus says the flight computers. In this case, the pilots were supposed to be able to take control, but they didn't know they could overrule the computers -- or how to do it. On both flights the system kept pushing the nose down until the plane crashed, killing 346 people in all.

Similarly, drivers in cars with automated assistance technology often don’t fully understand how the systems work and how to properly use them, sometimes with fatal results.

It takes time and effort to become familiar with new automotive technologies, said Bryan Reimer, a research scientist at MIT's AgeLab. This can make it difficult to switch vehicles, especially when renting cars.

The growing number of these devices could confuse many drivers. The new systems can help keep the car in the proper lane and watch for cars in blind spots or coming from a side street. They may keep drivers from rear-ending the car in front or from backing into a car or pedestrian behind them. Some cars park themselves. If a driver turns on adaptive cruise control and tells the car to stay 50 feet behind the car ahead, the computer will try to hold that 50-foot gap as the other car speeds up or slows down.

Half of the vehicles now being manufactured, including relatively inexpensive ones, are semi-automated. Automatic emergency braking will be standard in new cars in the U.S. by 2022.

The technology is meant to help drivers avoid accidents, not to drive for them, but many drivers do not distinguish between different levels of automation. One survey showed that 11 percent of drivers thought they would be able to read or use their phone while the computers drove the car, confusing fully autonomous vehicles with conventional cars equipped with driver-assistance technology.

The driver should always be ready to take control. If the car ahead suddenly swerves to avoid a stopped car, for example, the automation may not be able to react fast enough.

Similar limitations arise on curves, where adaptive cruise control can suddenly begin tracking a car in an adjacent lane rather than the car ahead in the same lane.

Small objects and people, particularly children, may not show up on rear-facing cameras.

Automakers also use different technologies, alarms and controls.

Drivers getting into a new or rented car may not understand how its technology works or what its limits are. Rental car companies don’t have the staff or the time to show customers how the new gadgets work. This puts drivers in the same underprepared position as the 737 pilots. When an alarm goes off or a warning light signals a problem, do they know what to do?

The solution, Hutchins said, is to educate drivers.

Researchers, car companies, insurance agencies, government agencies and law firms are beginning to meet to discuss the issue, but there are currently no plans to provide drivers with the information they need, said Hutchins. His paper listed more than two dozen things drivers should be taught to improve safety, including how to tell which functions are on or off and exactly what each function does.

Simply adding pages to the owner’s manuals won’t do the job, as airlines found out.

The owner’s manual for an Airbus A320 airliner runs 2,700 pages on an iPad, which is of little use when you stall at 30,000 feet or merge onto the New Jersey Turnpike.