The Math Of Brewing Coffee Can Model Anesthesia

(Inside Science) -- Mathematics that can describe coffeepots, forest fires and flu outbreaks may also underpin the brain's response to anesthesia, a new study suggests.

The mathematical model of the brain, published in Physical Review Letters, marks the latest attempt to simulate the surprisingly complicated effects of general anesthetics across the brain. Despite modern medicine’s 160-year use of ether, laughing gas and propofol in surgery, researchers still don’t know how exactly they tamp down the back-and-forth between the thalamus -- the brain's hub for sensory information -- and the cortex, the wrinkly outer layer and seat of consciousness.

"It's a medical wonder that we really don’t know the molecular mechanism," said Yan Xu, the vice chairman for basic sciences at the University of Pittsburgh School of Medicine in Pennsylvania, and the senior author of the study.

Researchers can track the amount of activity in the cortex by measuring brain waves, the rhythmic electrical crackles in the brain's outermost nerve cells. A dose of anesthetics causes brain waves to drop off predictably as activity ebbs. But how do anesthetics -- which act on individual nerves -- slow down brain waves as a whole?

This isn't an easy question. It's a bit like asking how millions of leaderless ants coalesce and build an anthill. So researchers tried mathematically modeling these patterns in an effort to understand what might be going on. Xu, his student David Zhou and their collaborators started by building a mathematically generic "brain" with a branch of mathematics called percolation theory, which can be used to model everything from sponges’ porosity to flu outbreaks.

In essence, the theory assembles a large grid and then lays down some rules about how things move through the grid. In a coffeepot modeled with percolation theory, for example, the coffee grounds constitute a 3-D lattice, and a point in this lattice can be occupied by one of two things: empty space or a solid coffee ground.

To brew a cup of joe, water needs to weave its way through the grounds along a continuous path of empty space in the grid. But the odds of this path existing change with how loosely or tightly the coffee grounds are packed. At some happy medium, the coffee grounds just barely let water drip through, sending delicious coffee into the pot below. The mixture of coffee grounds and empty space delicately straddles the gap between no dripping and too much dripping, demarcating a "phase transition" much like that which occurs in liquid water on the cusp of boiling into vapor.
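For readers who want to see the coffeepot picture in action, here is a minimal sketch in Python. It is an illustration only, not anything from the study: it flattens the 3-D lattice into a 2-D square grid for simplicity, and the names `make_grid`, `percolates` and `drip_probability` are made up for this example. Each site is empty space with probability `open_fraction`, and water "drips" if empty sites form an unbroken path from the top row to the bottom row.

```python
import random
from collections import deque


def make_grid(size, open_fraction, rng):
    """Build a size x size grid; True marks empty space, False a coffee ground."""
    return [[rng.random() < open_fraction for _ in range(size)]
            for _ in range(size)]


def percolates(grid):
    """Return True if empty sites connect the top row to the bottom row."""
    size = len(grid)
    # Start the search from every empty site in the top row.
    queue = deque((0, c) for c in range(size) if grid[0][c])
    seen = set(queue)
    while queue:
        r, c = queue.popleft()
        if r == size - 1:
            return True  # water reached the bottom of the filter
        # Water moves only to the four directly adjacent sites.
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < size and 0 <= nc < size
                    and grid[nr][nc] and (nr, nc) not in seen):
                seen.add((nr, nc))
                queue.append((nr, nc))
    return False


def drip_probability(size, open_fraction, trials=200, seed=0):
    """Estimate how often a random grid lets water through."""
    rng = random.Random(seed)
    hits = sum(percolates(make_grid(size, open_fraction, rng))
               for _ in range(trials))
    return hits / trials


if __name__ == "__main__":
    for fraction in (0.40, 0.50, 0.55, 0.60, 0.65, 0.70, 0.80):
        print(f"{fraction:.2f} empty -> drips in "
              f"{drip_probability(40, fraction):.0%} of trials")
```

Running the script for a range of packing densities should show the estimated drip rate climbing steeply over a narrow band of `open_fraction` values rather than rising evenly, which is the grid-model counterpart of the happy medium described above.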

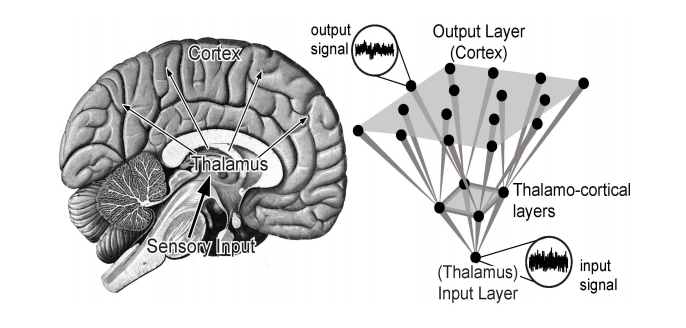

For their percolation model, Zhou and Xu mathematically built a grid of "nerve cells" wired together in layers roughly resembling the structures of the thalamus and cortex. Each "nerve cell" could send signals to others; "remember" the effects of prior signals it had received; and randomly fail to fire.

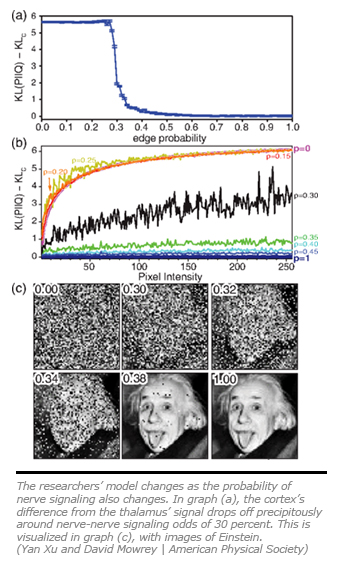

The researchers then changed the odds that a given nerve would send a signal to another, a parameter akin to the coffee grounds' packing density. Lowering the odds was like giving their "brain" a dose of anesthesia; no matter the specifics, anesthetics generally diminish nerve-nerve signaling. When the researchers fed a randomly generated signal into the model’s first layer of "nerve cells" -- their stand-in for the thalamus -- they found that they could replicate the different brain waves in their outermost layer of "cortex," depending on how they set the odds.
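The layered setup can also be sketched in a few lines of Python. This is a toy illustration built on assumptions, not a reproduction of the published model: it leaves out the "memory" and random failure to fire described above, wires each cell to just three cells in the layer before it, and uses hypothetical names (`propagate`, `cortex_activity`, `p_signal`). The one idea it keeps is the dial the researchers turned, the odds that an active cell passes its signal along.

```python
import random


def propagate(input_layer, n_layers, p_signal, rng):
    """Pass a pattern of active cells through n_layers of noisy connections.

    Each cell listens to three cells in the previous layer (left, center,
    right, wrapping around at the edges). Every active input gets through
    with probability p_signal; the cell fires if at least one gets through.
    """
    layer = list(input_layer)
    width = len(layer)
    for _ in range(n_layers):
        next_layer = []
        for i in range(width):
            fired = False
            for offset in (-1, 0, 1):
                source_active = layer[(i + offset) % width]
                if source_active and rng.random() < p_signal:
                    fired = True
            next_layer.append(fired)
        layer = next_layer
    return layer


def cortex_activity(p_signal, width=200, n_layers=6, seed=0):
    """Feed a random input into the first layer; return the fraction of
    cells firing in the last layer."""
    rng = random.Random(seed)
    thalamus = [rng.random() < 0.5 for _ in range(width)]
    cortex = propagate(thalamus, n_layers, p_signal, rng)
    return sum(cortex) / width


if __name__ == "__main__":
    for p in (0.9, 0.6, 0.4, 0.3, 0.2, 0.1):
        print(f"signal odds {p:.1f} -> {cortex_activity(p):.0%} "
              "of final-layer cells firing")
```

Lowering `p_signal` in this toy plays the role of the anesthetic dose: with the odds at zero nothing reaches the last layer, and with the odds at one every active region spreads forward untouched. The specific numbers it prints should not be compared with the percentages reported in the study.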

When the chances for nerve-nerve signaling were high, the cortex and thalamus were largely in sync, crackling with activity comparable to beta waves, the brain waves associated with wakefulness. But when the signaling odds soured, their model's brain waves grew sluggish, eventually mimicking the delta waves of deep sleep. Particularly low odds of signaling yielded silence in the cortex, punctuated by brief spurts of activity -- a phenomenon called "burst suppression" that human brains exhibit under deep anesthesia.

But was there a critical point where the flow of information through their model rapidly improved, akin to finding the perfectly packed coffee? The researchers found that the model was surprisingly sensitive at specific odds. Give the model's primed "nerves" a 32 percent chance of signaling their neighbors, and quite a bit of information makes it intact from the "thalamus" to the "cortex." Lowering those odds by a mere 2 percent, however, massively worsens the information transfer from the thalamus to the cortex -- an effect the researchers illustrated by spoon-feeding their model an image of Albert Einstein. While the change doesn’t fit the exact math for a "phase transition," it does line up with how humans respond to anesthetics: slightly at first, and then much more once a certain concentration is reached.

The theoretical model backs up experimental evidence from 2010 of percolation in living neural networks. And while the researchers and outside experts are loath to overstate the model's scope, the study suggests that consciousness -- or wakefulness -- depends on high-quality information transfer between the thalamus and cortex, in line with other theories.

But outside experts caution that the model is quite abstract, thus muddying its relationship to the actual brain. "The link to neurophysiology is a little bit tenuous," said Moira Steyn-Ross, a physicist at the University of Waikato in New Zealand. She wasn't involved with the study. "They have the layers, they can describe various behaviors, but how exactly does that relate to anesthetic action?"

Part of the problem, said Michael Hawrylycz, an investigator at the Allen Institute for Brain Science in Seattle, Washington, is that "we don’t know enough about the detailed circuitry of the brain ... to really ascertain the realism of models" like Zhou and Xu's.

But Xu brushes aside concerns that his model is too generic to be relevant, noting that for large-scale phenomena, the small details actually might not matter.

"If you want to study the Earth’s movement," he said, "the issue of Golden Gate Bridge becomes irrelevant."

Michael Greshko is a science writer based in Washington, D.C., who has written for NOVA Next, the National Academies, and NYTimes.com, among other outlets. He tweets at @michaelgreshko.