Computer Science Technique Helps Astronomers Explore the Universe

(Inside Science) -- Google uses "deep learning" to generate captions for images, Facebook uses it to recognize faces and Tesla uses it to train self-driving cars. Now astronomers have caught on to deep learning, a form of machine learning in which a computer can be trained to identify or classify particular objects in images.

The newest sky surveys, such as the Dark Energy Survey, which uses a 4-meter telescope in northern Chile and covers about one quarter of the southern sky, take millions of images of a variety of celestial objects. These images often include visual distortions, cosmic rays and satellite trails that make them difficult to interpret. Deep learning could help process this deluge of data quickly.

“Astronomy is the next frontier to take it on,” said Brian Nord, an astrophysicist at Fermilab in Batavia, Illinois.

Nord is one of a group of astrophysicists searching for rare gravitational lenses, which show up as curved slivers of light or duplicated images that signal a massive object bending light rays. The scientists often have to sift through numerous images by eye, one at a time. But now they have a potentially game-changing technique at their disposal.

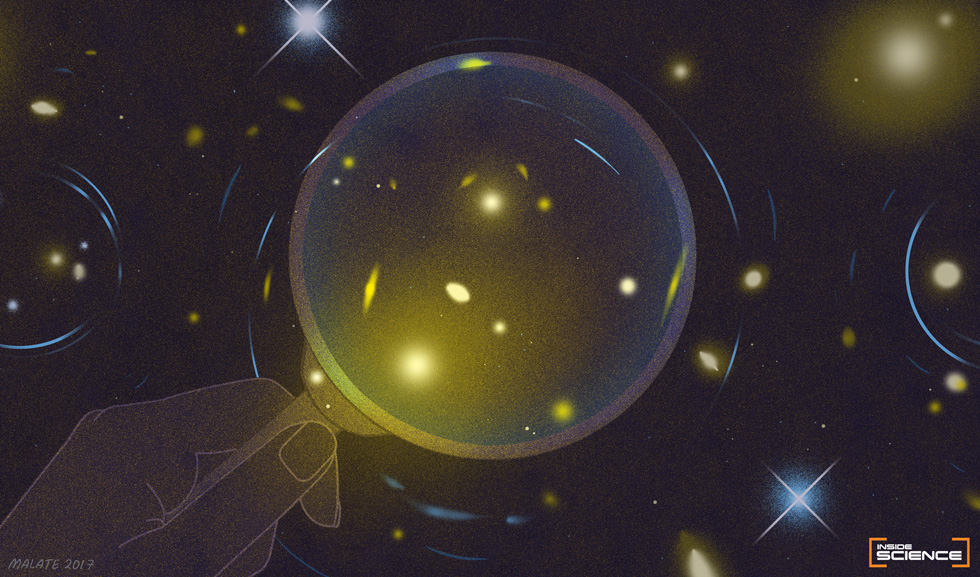

Massive objects in space -- like clusters of galaxies combined with dark matter hidden from view -- distort the light we see from faraway galaxies and quasars, deflecting and warping the light rays around them. Distant galaxies look like magnified arcs, as if seen through the edge of a cosmic magnifying glass.

Scientists have found hundreds of such lenses so far, confirming predictions that Albert Einstein and others made in the 1930s using the general theory of relativity. With newly developed deep learning tools, astronomers expect to find at least 2,000 more with the Dark Energy Survey, according to research Nord presented at the American Astronomical Society meeting in Grapevine, Texas, on Jan. 4. A large catalog of gravitational lenses would help astronomers learn more about the nature of dark matter and how it holds galaxies together.

Finding gravitational lenses is like searching for needles in distant haystacks, where no two needles or haystacks are alike. “Deep learning is a way for us to create a model of a complicated system,” Nord said.

To make complex classifications, astronomers have long used statistical machine learning techniques such as neural networks: programmed systems of nodes arranged in layers and connected in a web, loosely inspired by neurons in the human brain. Deep learning stacks many more of these interconnected layers, most of them “hidden” between input and output, with each successive layer representing features of increasing complexity as the computation proceeds from input to output.
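To make the idea of stacked layers concrete, here is a minimal Python sketch of a feedforward network's forward pass. It is not Nord's software: the function names (`make_network`, `forward`), the layer sizes and the random, untrained weights are all hypothetical, chosen only to show that a "deep" network is the same computation repeated through more hidden layers.

```python
import math
import random

# Hypothetical toy network with untrained random weights, for illustration only.

def relu(x: float) -> float:
    """Hidden-layer activation: pass positive signals, zero out negatives."""
    return max(0.0, x)

def sigmoid(x: float) -> float:
    """Output activation squashing a score into [0, 1], written to avoid overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def make_network(sizes, seed=0):
    """Build fully connected layers; each node holds one weight per input plus a bias."""
    rng = random.Random(seed)
    network = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        layer = [([rng.gauss(0.0, 1.0) for _ in range(n_in)], 0.0) for _ in range(n_out)]
        network.append(layer)
    return network

def forward(network, inputs):
    """Pass inputs through every layer in turn, from input to output."""
    activations = inputs
    for depth, layer in enumerate(network):
        is_output = depth == len(network) - 1
        next_activations = []
        for weights, bias in layer:
            # Weighted sum of the previous layer's outputs, then a nonlinearity.
            z = sum(w * a for w, a in zip(weights, activations)) + bias
            next_activations.append(sigmoid(z) if is_output else relu(z))
        activations = next_activations
    return activations

# A "shallow" net has one hidden layer; a "deep" one stacks several.
shallow = make_network([16, 8, 1])
deep = make_network([16, 32, 16, 8, 1])
pixels = [0.5] * 16  # stand-in for a tiny flattened 4x4 image
print(forward(shallow, pixels), forward(deep, pixels))
```

Both networks return a single score between 0 and 1 that could be read as "how lens-like is this image," but with random weights the number is meaningless until the network is trained on labeled examples.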

For example, with facial recognition software, someone feeds in an image, and the system first detects edges, lines and curves. Intermediate layers then put together higher-level features, like eyes or a mouth, and eventually a face. For gravitational lenses, the software would gradually recognize a big galaxy surrounded by arcs, indicating lensed background objects.
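The "edges first" step can be illustrated with the operation a convolutional layer applies: sliding a small kernel across the image. In the sketch below, the classic Sobel kernel is a hand-written stand-in (an assumption for illustration); a trained network would learn its own kernels from example images, and the later layers that assemble edges into arcs around a galaxy are not shown.

```python
# Hand-written stand-in for a learned first-layer filter: it responds to
# left-to-right changes in brightness (vertical edges).
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

def convolve2d(image, kernel):
    """Valid-mode 2D cross-correlation, the operation a convolutional layer applies."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# A 5x6 toy image: dark on the left, bright on the right.
image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]
for row in convolve2d(image, SOBEL_X):
    print(row)  # prints [0, 4, 4, 0]: a response only where brightness changes
```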

After it’s been trained with many lens images, such an algorithm can find new lenses in images it has never encountered before. Current state-of-the-art algorithms classify images correctly all but a few percent of the time; the remaining errors come when they mistake a particularly messy image for the real thing.

“If it works but is 97 percent accurate, you could be vastly swamped by false positives,” said Colin Jacobs, who, along with Karl Glazebrook at Swinburne University of Technology in Melbourne, Australia, is also working on the problem. “Ideally, it should be more accurate than what you’d need for computer vision or facial recognition,” he added.
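Jacobs's warning is a base-rate effect: lenses are rare, so even a small error rate applied to the far more numerous ordinary galaxies produces many false alarms. The arithmetic below is a sketch under loudly stated assumptions: a hypothetical search of 1,000,000 galaxies containing 2,000 lenses, with "97 percent accurate" read as flagging 97 percent of real lenses and correctly rejecting 97 percent of everything else.

```python
def confusion_counts(n_objects, n_lenses, sensitivity, specificity):
    """Expected true/false positives and negatives for a classifier on a survey.

    sensitivity: fraction of real lenses the classifier flags.
    specificity: fraction of non-lenses it correctly rejects.
    """
    n_non_lenses = n_objects - n_lenses
    true_pos = sensitivity * n_lenses
    false_neg = n_lenses - true_pos
    false_pos = (1.0 - specificity) * n_non_lenses
    true_neg = n_non_lenses - false_pos
    return true_pos, false_pos, false_neg, true_neg

def precision(true_pos, false_pos):
    """Fraction of flagged images that are real lenses."""
    return true_pos / (true_pos + false_pos)

# Hypothetical numbers, chosen only to illustrate scale.
tp, fp, fn, tn = confusion_counts(n_objects=1_000_000, n_lenses=2_000,
                                  sensitivity=0.97, specificity=0.97)
print(f"real lenses flagged: {tp:,.0f}")                  # 1,940
print(f"false alarms:        {fp:,.0f}")                  # 29,940
print(f"precision:           {precision(tp, fp):.1%}")    # 6.1%
```

Under these assumptions, roughly 15 look-alikes are flagged for every real lens, which is why a lens finder needs a lower false-positive rate than a typical face-recognition system.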

To address this challenge, Nord, Jacobs and their colleagues could design the algorithm to be strict, ensuring that it finds only the cream of the crop, the clearest lenses in a survey. But this risks missing many lenses. Instead, they are trying more lenient search criteria, knowing this will mean later weeding out by hand some images that merely look a bit like lenses.
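The strict-versus-lenient choice amounts to picking a score threshold, trading precision (how many flagged images are real lenses) against recall (how many real lenses are found). The scores and labels below are invented for illustration, and `precision_recall` is a hypothetical helper, not part of any survey pipeline.

```python
# Hypothetical classifier scores (higher = more lens-like) and true labels
# (1 = real lens, 0 = look-alike). Invented for illustration.
SCORES = [0.98, 0.95, 0.91, 0.85, 0.80, 0.72, 0.65, 0.55, 0.40, 0.30]
LABELS = [1, 1, 0, 1, 0, 1, 0, 1, 0, 0]

def precision_recall(scores, labels, threshold):
    """Flag every image scoring at or above threshold and report the trade-off."""
    flagged = [label for score, label in zip(scores, labels) if score >= threshold]
    true_pos = sum(flagged)
    false_pos = len(flagged) - true_pos  # images someone must weed out by hand
    # Convention: precision is 0.0 when nothing is flagged.
    precision = true_pos / len(flagged) if flagged else 0.0
    # Fraction of all real lenses that the threshold recovers.
    recall = true_pos / sum(labels) if any(labels) else 0.0
    return precision, recall, false_pos

for name, threshold in [("strict", 0.9), ("lenient", 0.5)]:
    p, r, fp = precision_recall(SCORES, LABELS, threshold)
    print(f"{name}: precision={p:.2f} recall={r:.2f} to check by hand={fp}")
```

With these made-up numbers, the strict threshold recovers only 2 of the 5 real lenses but leaves just 1 look-alike to reject, while the lenient threshold recovers all 5 at the cost of 3 look-alikes to weed out by hand.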

Over the past couple of years, astronomers have begun to apply deep learning in other areas as well, mostly to analyze images in other ways. They have used the techniques to distinguish between distant galaxies and stars in the Milky Way, to estimate the distances to faraway objects and to classify the structures of galaxies, which can take on a variety of spiral and elliptical shapes.

Others have turned to citizen science, recruiting people around the world to help sort through images. A project called Galaxy Zoo, for example, has classified the structures of hundreds of thousands of galaxies, while another, called Space Warps, has discovered dozens of candidate gravitational lenses that earlier searches had missed.

Nord applauds these efforts, but if his software works as well as advertised, “deep learning has the potential to be much faster,” he said.