How Conscious Are You?

Illustration of neural synapses inside the human brain

Edited from image by Day Donaldson via Flickr

(Inside Science) -- What is consciousness? For centuries, philosophers, scientists, and writers have pondered the question. The concept or even the word itself is difficult to define, and because of this it is one of the most difficult subjects to study scientifically.

One of the most common interpretations of consciousness is awareness or alertness, but even this is closely intertwined with other facets of consciousness such as self-awareness. While the metaphysical answer remains elusive, a group of psychologists from France has taken a slightly different approach -- is it possible to measure consciousness without completely understanding it?

Hailing from the aptly named Paris Descartes University, the researchers used the concept of entropy to explore the spectrum from unconsciousness to consciousness. In order to understand what this means, you first need to understand two things -- the complexity of the human brain, and the power of statistical mechanics.

"The most complex object in the universe"

The human brain is incredibly complex, not only because of its physical structure but also because of the number of ways its neural synapses can be combined. Consider a deck of 52 playing cards -- there are more ways to arrange them than 10 multiplied by itself 67 times. If every single person on Earth shuffled a deck every second without ever repeating an arrangement, it would take over 300 trillion trillion trillion trillion years to go through all of the possible arrangements.
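For readers who want to check the card arithmetic, here is a rough sketch. The population figure (about 7.5 billion) and the one-shuffle-per-second rate are assumptions made for illustration, not numbers taken from the researchers.

```python
import math

# Exact number of ways to order a 52-card deck (52 factorial).
arrangements = math.factorial(52)

# Assumptions for illustration only: about 7.5 billion people,
# each producing one new arrangement every second.
PEOPLE = 7.5e9
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def years_to_exhaust(total_arrangements, people=PEOPLE):
    """Years needed to step through every arrangement at one per person per second."""
    return total_arrangements / (people * SECONDS_PER_YEAR)


years = years_to_exhaust(arrangements)
print(f"52! is about {arrangements:.3e}")
print(f"Years needed: about {years:.1e}")
```

Under those assumptions the result lands a little above 3 followed by 50 zeros, which is the "300 trillion trillion trillion trillion" in the text.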

Now instead of 52 cards in a deck, the average human brain has roughly 86 billion neurons, each with about 7,000 synaptic connections to other neurons. So instead of 52 cards, we have hundreds of trillions of shuffle-able neural synapses that make up our brain's "deck."

Mathematically, the number of possible combinations is more than 10 followed by 5 trillion zeros. In comparison, the number of atoms in the entire known universe is estimated at about 10 multiplied by itself 80 times.

It is virtually impossible to examine all these combinations one by one -- there needs to be a way to extract meaningful parameters from this incredibly complex system. Luckily, statistical physicists and mathematicians have long dealt with extremely complex systems, such as the diffusion of gas particles or the propagation of electrons. Statistical mechanics specializes in quantifying complex systems using well-defined parameters.

Human brains versus computers

"One of the key findings over the past decade or so, from my study of information systems, is that they tend to pursue states that maximize their future freedom of action," said Alexander Wissner-Gross, a physicist and computer scientist affiliated with Harvard University and MIT, both in Cambridge, Massachusetts.

A computer is less responsive when it is sleeping or maxed out on resources, and more responsive during moderate use. While this is intuitive and easy to verify for a computer, how does it compare to a human brain? After all, there is no "Task Manager" program for the human brain.

"It is natural to ask the question of what should a human brain look like if it is also trying to do the same thing," Wissner-Gross added.

Understanding entropy

Currently, researchers looking at consciousness typically use "a black box approach, in the sense that you have some signal from the brain and then try to guess how conscious is the subject," explained Ramon Erra, the main author of a research paper written by the Descartes group and accepted for publication in the journal Physical Review E. "Here instead we have a way to interpret those signals based on something we understand, which is information entropy."

Physical entropy describes the disorder of a physical system, such as how a drop of ink disperses in a pool of water. The same concept can also be used as a mathematical model to describe the disorder of an information system.

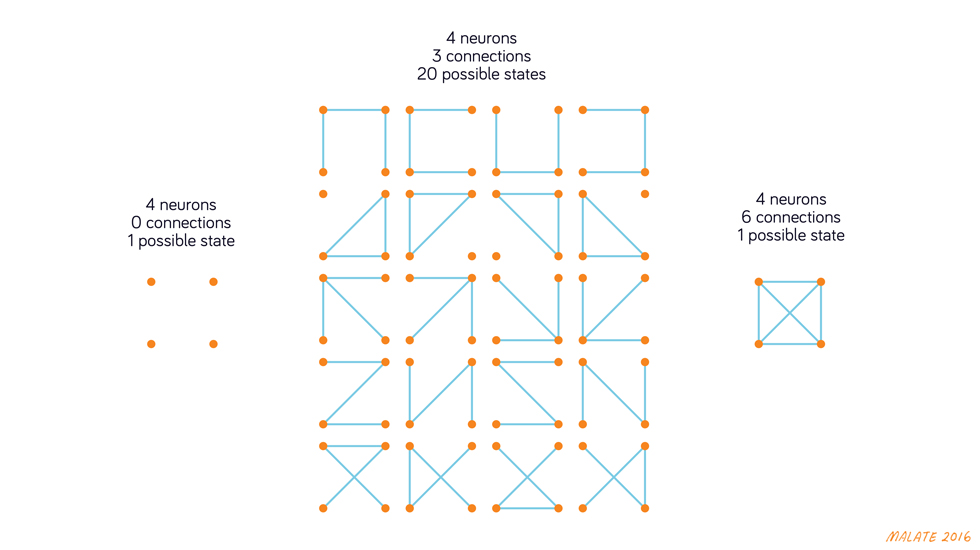

This is perhaps best explained by a diagram. Let's look at an extremely simplified version of the human brain -- one with just 4 cells, represented by the orange dots, each with 3 possible connections, represented by the blue lines.

As illustrated by the diagram, there is only 1 possible way to have 0 connections, and also only 1 possible way to have 6 connections. But an intermediate state, such as one with 3 connections, can be arranged in many more ways. The system is much more flexible or, in the language of statistical mechanics, contains more entropy.
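The counting behind the four-cell picture can be reproduced in a few lines. This is a minimal sketch that assumes entropy is taken as the natural logarithm of the number of possible arrangements; the function names are illustrative and do not come from the research paper.

```python
from math import comb, log

# 4 cells can be paired up in 6 different ways, so there are 6 possible connections.
POSSIBLE_LINKS = comb(4, 2)


def configurations(active_links, possible_links=POSSIBLE_LINKS):
    """Number of ways to choose which connections are switched on."""
    return comb(possible_links, active_links)


def entropy(active_links, possible_links=POSSIBLE_LINKS):
    """Assumed definition: natural log of the number of configurations."""
    return log(configurations(active_links, possible_links))


for k in range(POSSIBLE_LINKS + 1):
    print(f"{k} connections: {configurations(k):2d} arrangements, "
          f"entropy {entropy(k):.2f}")
```

The count climbs from 1 arrangement with no connections to 20 arrangements with 3 connections, then falls back to 1 when all 6 are on, so the entropy peaks in the middle.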

As mentioned earlier, because the human brain is incredibly complex, it is impossible to study all these connections one by one. However, by using statistical mechanics to boil down the details to a single metric -- the amount of entropy in the brain -- scientists can begin to unravel the complex mechanisms of the human brain.

Entropy in the human brain

Erra's group claimed to be the first to obtain clinical data supporting the hypothesis that entropy and consciousness are closely correlated. The researchers recorded the brain activity of healthy individuals as well as people with epilepsy while they were asleep, awake, and during episodes of epileptic seizure.

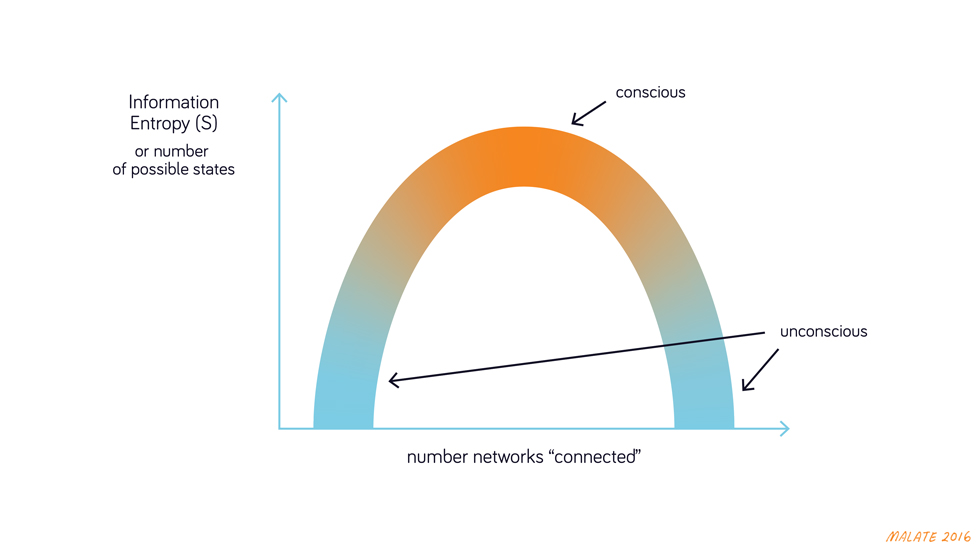

As expected, there is minimal activity during dreamless sleep, intermediate activity during a conscious state, and abundant activity during a seizure. While most of this was already known, their breakthrough was the ability to interpret these signals.

As seen from the diagram above, minimal and maximal activities during the sleeping and seizure states contain much lower entropy than intermediate activities during a conscious state.
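The same counting argument can be scaled up from four cells to a realistic number of recording sites. The sketch below is an illustration under stated assumptions, not the researchers' analysis: the 64 channels and the three connection fractions are hypothetical, and entropy is again assumed to be the natural logarithm of the number of ways to choose which pairs are connected.

```python
from math import comb, lgamma


def log_configurations(possible_pairs, connected_pairs):
    """Natural log of C(possible_pairs, connected_pairs), computed with log-gamma."""
    n, p = possible_pairs, connected_pairs
    return lgamma(n + 1) - lgamma(p + 1) - lgamma(n - p + 1)


CHANNELS = 64                        # hypothetical number of recording channels
POSSIBLE_PAIRS = comb(CHANNELS, 2)   # every pair of channels could be connected

# Hypothetical fractions of connected pairs, chosen only to show the trend.
scenarios = [
    ("few connections", 0.05),
    ("intermediate", 0.50),
    ("nearly all connected", 0.95),
]

for label, fraction in scenarios:
    p = round(POSSIBLE_PAIRS * fraction)
    s = log_configurations(POSSIBLE_PAIRS, p)
    print(f"{label:>22}: {p:4d} connected pairs, entropy ~ {s:.0f}")
```

Both extremes give the same, much lower value than the intermediate case, mirroring the pattern described above for the sleeping, conscious, and seizure states.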

By using the concept of entropy, the researchers were able to provide context and a relatively well-defined metric for measuring consciousness.

"In a clinical setting you want to have a device that can tell you if a person is conscious or not. This is a big deal because right now it's not that easy to tell how conscious a patient actually is -- such as how much pain are they feeling during operations," said Erra. "There are also other practical questions, such as how to make a device that can monitor comas and seizures."

The ability to measure and define consciousness is not only a scientific curiosity, but also has immense practical implications, such as discerning whether a person who appears to be in a coma is actually conscious, or whether a patient is feeling pain while under anesthesia. Understanding awareness and consciousness remains an ongoing challenge. Other methods have been used to monitor awareness, but applying the theory of entropy to the human brain is relatively new. Scientists have since used the concept to study many neurological conditions, from ADHD to the aging of the human brain.

Scientists and doctors already use many methods to monitor a patient's brain activity. The most popular is electroencephalography, better known as EEG, in which the patient wears a cap with a few dozen electrodes that use conductive gel to pick up the tiny electric signals emitted by the brain.

If the link between consciousness and entropy proves to be robust, the implementation of the method will be relatively cheap, since it will mostly depend on software instead of expensive new hardware.

The human brain is incredibly complex, but with this new discovery, scientists may be beginning to wrap their heads around it.