Why Do We Need Super Accurate Atomic Clocks?

(Inside Science) -- The GPS receiver in your car or cellphone works by listening to satellites broadcast their time and location. Once the receiver has "acquired" four satellites, it can calculate its own position by comparing the signals. Since the signals are broadcast using microwaves that travel at the speed of light, an error of a millionth of a second on a GPS satellite clock could put you roughly 300 meters (about a fifth of a mile) off course.

Luckily, the atomic clocks on GPS satellites, due to their incredible stability and regular synchronization, maintain an error of less than 1 billionth of a second.
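To see where these distances come from, here is a minimal Python sketch that converts a clock error into the distance light travels in that time. It is a simplification: it treats the clock error as a pure ranging error on a single signal, whereas a real position error also depends on satellite geometry. The function and variable names are illustrative.

```python
# Speed of light in vacuum, in meters per second (exact by definition).
SPEED_OF_LIGHT_M_PER_S = 299_792_458
METERS_PER_MILE = 1609.344


def ranging_error_m(clock_error_s: float) -> float:
    """Distance light travels during a given clock error, in meters."""
    return SPEED_OF_LIGHT_M_PER_S * clock_error_s


microsecond_error_m = ranging_error_m(1e-6)  # one millionth of a second
nanosecond_error_m = ranging_error_m(1e-9)   # one billionth of a second

print(f"1 microsecond -> {microsecond_error_m:.0f} m "
      f"({microsecond_error_m / METERS_PER_MILE:.2f} mi)")
print(f"1 nanosecond  -> {nanosecond_error_m:.2f} m")
```

A microsecond corresponds to about 300 meters, while a nanosecond corresponds to about 30 centimeters.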

Today, the best clocks scientists are working on can do even better -- more than a million times better by some measures. These absurdly good clocks may enable new applications as unimaginable as GPS once was, ranging from predicting earthquakes to discovering entirely new physics.

Yet not all high-performance clocks are equal -- there is a range of designs, and some state-of-the-art clocks are better suited to particular applications than others. To understand why -- and to understand the performance of a clock more generally -- we first need to understand two basic concepts in statistics: precision and accuracy.

Arrows and clock ticks

Imagine an archer who has shot ten arrows. In this scenario, precision is a measurement of the arrows' positions relative to each other and accuracy is a measurement of their positions relative to the bullseye. A precise archer isn't necessarily an accurate one, and vice versa.

The precision of an archer is analogous to a concept called clock stability. If one thinks of each tick of the clock as a shot and hitting the bullseye as keeping the exact right time between every tick, then a precise but not accurate clock would consistently tick either slower or faster than the desired amount of time. On the other hand, an accurate but imprecise clock would tick sometimes faster and sometimes slower, but the accumulated errors would average out somewhat over time.
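The distinction can be made concrete with a small Python sketch. The tick intervals below are made-up numbers for illustration, and the plain standard deviation is only a stand-in for the spread: clock researchers typically characterize stability with more specialized statistics such as the Allan deviation.

```python
from statistics import mean, pstdev

TRUE_TICK_S = 1.0  # the interval a perfect clock would tick at

# Hypothetical tick intervals in seconds, invented for this illustration.
stable_but_inaccurate = [1.0100, 1.0101, 1.0099, 1.0100, 1.0100]
accurate_but_unstable = [0.990, 1.012, 1.003, 0.985, 1.010]


def describe(ticks):
    """Return (offset, scatter) for a list of tick intervals."""
    offset = mean(ticks) - TRUE_TICK_S  # accuracy: distance from the bullseye
    scatter = pstdev(ticks)             # precision: spread of the arrows
    return offset, scatter


stable_offset, stable_scatter = describe(stable_but_inaccurate)
unstable_offset, unstable_scatter = describe(accurate_but_unstable)

print(f"stable but inaccurate: offset={stable_offset:+.4f} s, "
      f"scatter={stable_scatter:.5f} s")
print(f"accurate but unstable: offset={unstable_offset:+.4f} s, "
      f"scatter={unstable_scatter:.5f} s")
```

The first clock is tightly grouped but consistently about 0.01 seconds slow per tick; the second scatters widely, yet its errors average out to nearly zero.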

"There's a lot of applications [for clocks] that only need really good stability, and then there are a range of applications where just stability is not enough, and you also need accuracy," said Andrew Ludlow, a physicist from the National Institute of Standards and Technology in Boulder, Colorado.

Telecommunication and navigational systems generally require stable clocks, but they don't have to be highly accurate, he said. On the other hand, atomic clocks that physicists use to define a second need to be really accurate too.

A natural fuzziness

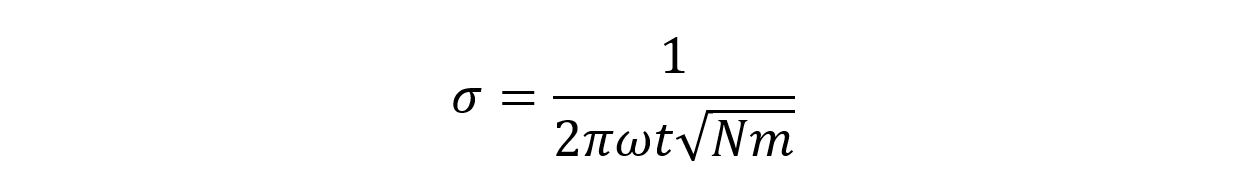

Currently, the stability of clocks is generally limited by experimental hang-ups, such as the laser technology in optical clocks. But even if we could build a clock free of technological limitations, it would still have a fundamental instability, set by the laws of quantum physics and given by this equation.

On the left-hand side, we have the stability, which is unit-free: a σ value of 0.1, for example, would mean an uncertainty of 10 percent in your measurement. This stability is determined by the parameters on the right-hand side, as described below.

- ω: the "ticking" frequency of the timekeeping source measured in cycles per second, or hertz (Hz). For a cesium-133 atom that gives out radiation with 9,192,631,770 cycles every second, the number would be 9,192,631,770 Hz.

- N: the number of "timekeepers," for example the total number of cesium atoms used by the clock.

- t: the cycle time, which is the length of each measurement for a predetermined number of "ticks" depending on the design of the clock. For example, if a clock is designed to register a data point every second, then t is simply 1 second.

- m: the total number of measurements during the experiment. For example, if the length of the experiment is a minute, and the clock is registering a data point every second, then m will be 60.

[Note: In reality, physical clocks need time to perform operations between each measurement, so m is usually less than (length of experiment/t). For example, if the clock needs a second to "reset" after every individual measurement, m would be 30 instead of 60 in our example. But in order to keep the following exercise simple, we will assume zero for this down time between measurements.]
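The effect of this down time on m can be sketched in a few lines of Python. The function name and the `dead_time_s` parameter are hypothetical labels chosen for this illustration.

```python
def measurement_count(experiment_length_s, cycle_time_s, dead_time_s=0):
    """Number of complete measurements that fit into the experiment.

    Each measurement occupies the cycle time t plus any reset (dead) time.
    """
    return int(experiment_length_s // (cycle_time_s + dead_time_s))


m_ideal = measurement_count(60, 1)          # no down time: 60
m_with_reset = measurement_count(60, 1, 1)  # one second of reset: 30

print(m_ideal, m_with_reset)
```

With zero down time, a one-minute experiment yields 60 data points; adding a one-second reset after each measurement halves that to 30.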

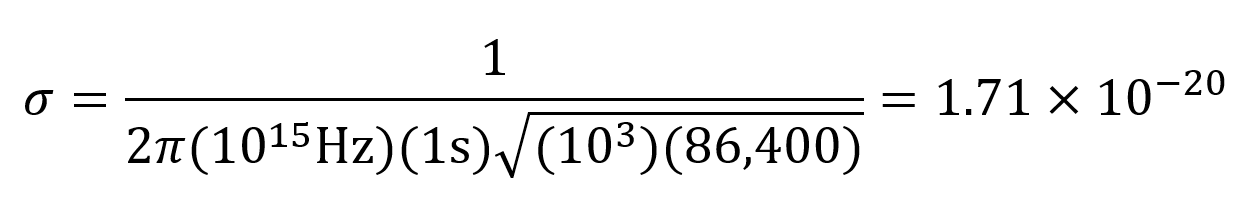

Now, let's test this out with some numbers. For a clock that keeps time by measuring a quantum phenomenon that occurs one thousand trillion times every second, ω would be 10^15 Hz, and if it counts for one second every time it probes for the phenomenon, then t would be 1 second. For N we can assume the value of 1,000, and for m we can use 86,400, the total number of seconds in a day.

For a day-long measurement, the stability-related uncertainty of our theoretical clock would be (1.71 x 10^-20) x 86,400 s = 1.5 x 10^-15 s, or 1.5 femtoseconds.
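The arithmetic above can be checked with a short Python sketch. It assumes the quantum limit takes the standard quantum-projection-noise form σ = 1 / (2π ω t √(N m)), with ω in hertz. That form reproduces the numbers quoted here, but it is an assumption, and because t is 1 second in this example the calculation does not test how σ depends on t. The function and variable names are illustrative.

```python
import math


def quantum_limited_instability(freq_hz, n_atoms, cycle_time_s, n_measurements):
    """Fractional instability, ASSUMING sigma = 1 / (2*pi*f*t*sqrt(N*m))."""
    return 1.0 / (2 * math.pi * freq_hz * cycle_time_s
                  * math.sqrt(n_atoms * n_measurements))


SECONDS_PER_DAY = 86_400

sigma = quantum_limited_instability(
    freq_hz=1e15,                    # one thousand trillion ticks per second
    n_atoms=1_000,                   # N
    cycle_time_s=1.0,                # t
    n_measurements=SECONDS_PER_DAY,  # m, one measurement per second for a day
)
time_uncertainty_s = sigma * SECONDS_PER_DAY

print(f"sigma = {sigma:.3g}")                         # about 1.71e-20
print(f"uncertainty = {time_uncertainty_s:.2g} s")    # about 1.5e-15 s
```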

Since this natural fuzziness of the clock is directly linked to the design of the clock, one can in theory keep improving the stability by making the denominator as big as possible. This can be done by choosing to measure a natural phenomenon that occurs at a super high and regular frequency, leading to a larger ω, or to measure more sources simultaneously, leading to a larger N.
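How much each knob helps can be estimated with a rough Python sketch. It assumes the instability scales as 1 / (ω √N), which is how the standard quantum-projection-noise limit behaves; the function name is hypothetical, and the example frequencies are the cesium and 10^15 Hz figures used elsewhere in this article.

```python
import math


def improvement_factor(freq_ratio=1.0, atom_ratio=1.0):
    """Factor by which instability shrinks when omega and N are scaled up.

    ASSUMES sigma is proportional to 1 / (omega * sqrt(N)).
    """
    return freq_ratio * math.sqrt(atom_ratio)


# Using 100 times more atoms only buys a factor of 10.
gain_from_atoms = improvement_factor(atom_ratio=100)

# Moving from the cesium microwave frequency to a 10^15 Hz transition.
CESIUM_HZ = 9_192_631_770
gain_from_frequency = improvement_factor(freq_ratio=1e15 / CESIUM_HZ)

print(f"100x more atoms: {gain_from_atoms:.0f}x more stable")
print(f"cesium -> 10^15 Hz: {gain_from_frequency:,.0f}x more stable")
```

Under this assumption, raising the frequency pays off linearly, while adding atoms pays off only as the square root, which is one reason a higher ticking frequency is such an attractive route.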

Each of these choices presents its own unique technological challenges, which sometimes put you at odds with the other devil in the detail -- accuracy.

Unlike the universal equation for calculating the level of quantum noise dictating a clock's stability, a clock's accuracy -- or in other words how closely its ticking rate matches expectations -- can be affected by an endless list of interactions with its environment.

"Magnetic fields and electric fields, for example, can perturb the ticking rate of the clock, but the effect depends on the details of the clock," said Ludlow. "We can come up with models to try to understand how they impact the clocks, but they're not universal in any way."

The barrage of external factors that can make a super sensitive clock drift faster or slower over time may, at first glance, seem like a nuisance. But if we can understand these effects well enough, they actually hold the key to whole new worlds of applications.

One man's inaccurate clock is another man's treasure

Travelling at roughly 8,700 mph across our sky, GPS satellites move fast enough for Einstein's theory of special relativity to have a noticeable effect on their clocks, slowing them by 7 microseconds each day.

However, because they travel at an altitude of more than 12,000 miles, the lower gravity experienced by GPS satellites also causes the clocks to speed up by 45 microseconds every day, as predicted by, you guessed it, Einstein again. This time by his theory of general relativity.

Lo and behold, compared to clocks on Earth, the clocks aboard GPS satellites indeed speed up by (45 – 7) = 38 microseconds. Every. Single. Day.
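Both numbers can be roughly reproduced with textbook approximations, as in the Python sketch below. It uses the low-speed time dilation formula v²/(2c²) and the weak-field gravitational shift GM/c² × (1/R − 1/r). The orbital altitude of about 20,200 km and the Earth constants are assumed nominal values, not figures from this article, and the sketch ignores Earth's rotation and non-spherical shape.

```python
SPEED_OF_LIGHT = 299_792_458.0         # m/s
EARTH_GM = 3.986004418e14              # m^3/s^2, Earth's gravitational parameter
EARTH_RADIUS_M = 6.371e6               # mean radius; where the ground clock sits
GPS_ALTITUDE_M = 2.02e7                # assumed nominal altitude, ~12,550 miles
GPS_SPEED_M_PER_S = 8_700 * 0.44704    # 8,700 mph converted to m/s
SECONDS_PER_DAY = 86_400

# Special relativity: a moving clock runs slow by about v^2 / (2 c^2).
velocity_shift = -GPS_SPEED_M_PER_S**2 / (2 * SPEED_OF_LIGHT**2)

# General relativity (weak field): a clock higher up in Earth's gravity runs
# fast by about GM/c^2 * (1/R - 1/r).
orbit_radius_m = EARTH_RADIUS_M + GPS_ALTITUDE_M
gravity_shift = (EARTH_GM / SPEED_OF_LIGHT**2
                 * (1 / EARTH_RADIUS_M - 1 / orbit_radius_m))

# Convert fractional rate shifts into microseconds gained or lost per day.
velocity_us_per_day = velocity_shift * SECONDS_PER_DAY * 1e6
gravity_us_per_day = gravity_shift * SECONDS_PER_DAY * 1e6
net_us_per_day = velocity_us_per_day + gravity_us_per_day

print(f"velocity: {velocity_us_per_day:+.1f} us/day")
print(f"gravity:  {gravity_us_per_day:+.1f} us/day")
print(f"net:      {net_us_per_day:+.1f} us/day")
```

With these inputs the sketch gives roughly −7 and +46 microseconds per day, for a net gain of about 38 microseconds, in line with the rounded figures above.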

Since these clocks are good enough for us to consider the effects of external factors such as a change in gravity, we can use them to measure these effects -- just like how professional archers can tell which way the wind was blowing by looking at where their arrows landed.

For example, a network of super stable clocks should be able to detect gravitational waves at frequencies inaccessible to laser interferometers, currently the only instruments sensitive enough to detect these tiny ripples through spacetime. A clock with a stability of 10^-20 would be able to give the planned space-based gravitational wave detectors a run for their money. A high-performance clock may also be able to sense tiny gravitational changes deep underground that signal conditions ripe for an earthquake or volcanic eruption.

Scientists are already using these super stable and accurate clocks to search for entirely new physics. For instance, they are testing whether fundamental constants are indeed constant, and are providing new avenues to investigate the decades-long puzzles of dark matter and dark energy.

[Yuen Yiu would like to thank Andrew Ludlow and David Leibrandt of the National Institute of Standards and Technology for conversations that inspired this article.]

Editor's note (September 12, 2019): This story has been edited to correct the location of the NIST office where Andrew Ludlow works.